
Basic RNN

RNN: Recurrent Neural Network

An RNN can be used to process inputs whose items follow a definite order.

What are RNNs?

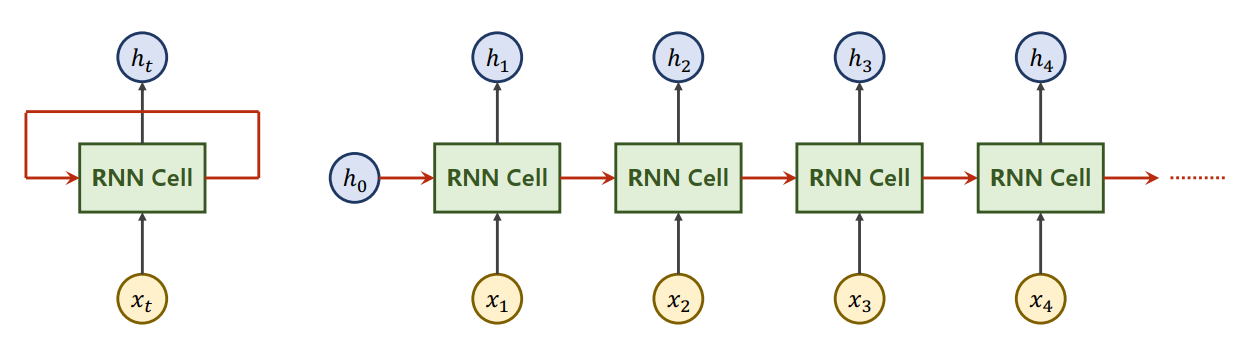

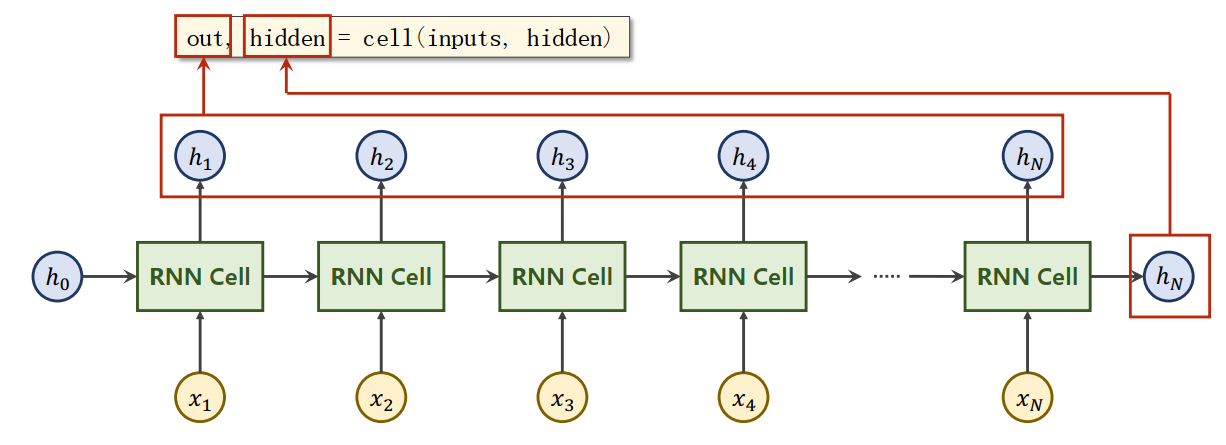

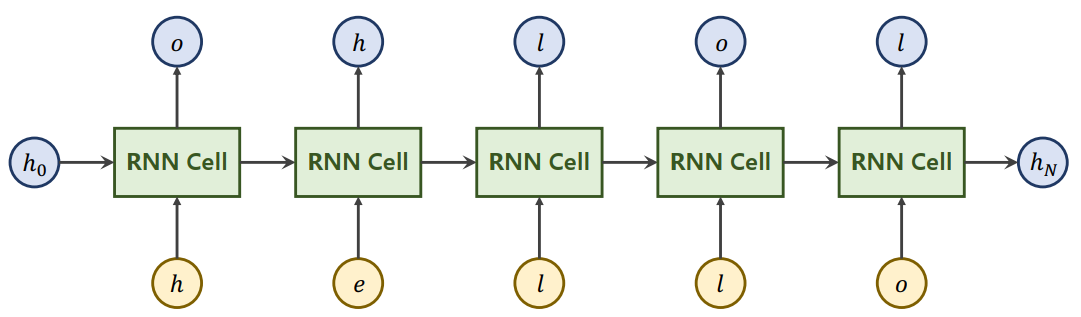

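An RNN Cell is essentially a linear layer that maps a vector of one dimension to a vector of another dimension. The structure is often drawn as in the left figure because the RNN Cell is shared across all steps; unrolling it gives the right figure. x is the input sequence. Its items are ordered, so the output for one item must be fed into the RNN Cell that processes the next item. For the first x, if prior knowledge is available it can be used as the initial hidden state $h_0$ fed into the first RNN Cell; otherwise, feed in an all-zero vector with the same dimension as the other h.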

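The overall process can be expressed by the following pseudocode:

line = Linear()  # the shared RNN Cell
h = 0            # initial hidden state
for x in X:
    h = line(x, h)

Because it is implemented with a loop, it is called a recurrent neural network.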

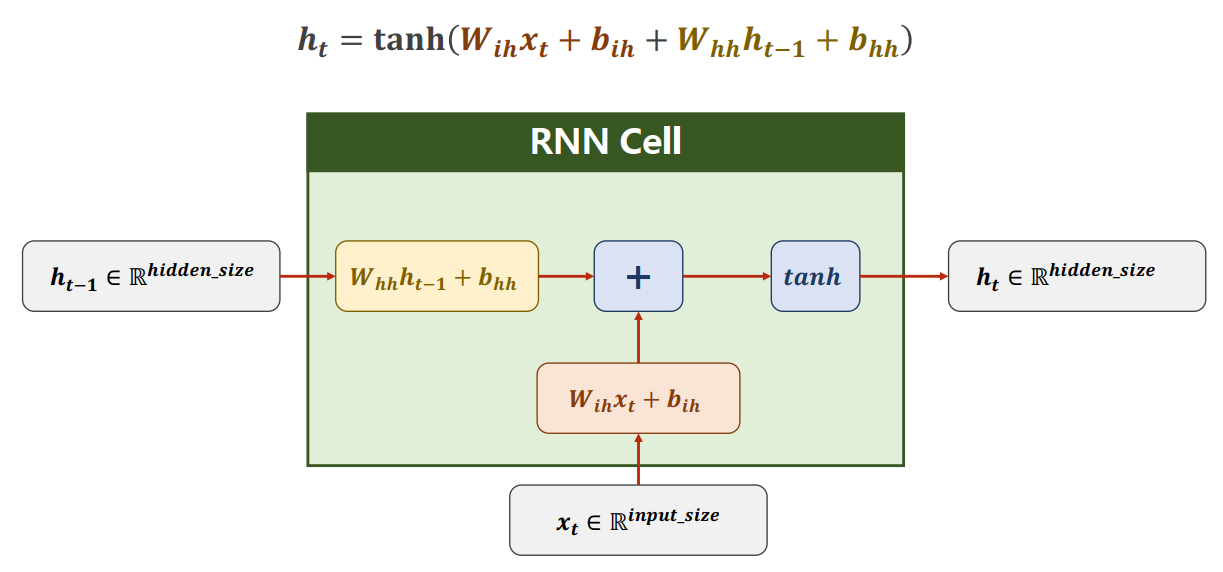

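First, apply a linear transformation to the input $x_t$. $W_{ih}$ should have shape hidden_size × input_size, which changes the input dimension from input_size to hidden_size; then add the bias $b_{ih}$.

Next, apply a linear transformation to the previous output $h_{t-1}$. $W_{hh}$ should have shape hidden_size × hidden_size, so the dimension stays unchanged; then add the bias $b_{hh}$.

Add the two transformed results (information fusion), then apply the activation: $h_t = \tanh(W_{ih} x_t + b_{ih} + W_{hh} h_{t-1} + b_{hh})$. RNNs commonly use tanh as the activation; its output lies in (-1, 1), and it is generally regarded as working well.

The sum of the two linear terms can be rewritten as follows:

$$W_{hh} h_{t-1} + W_{ih} x_t = \begin{bmatrix} W_{hh} & W_{ih} \end{bmatrix} \begin{bmatrix} h_{t-1} \\ x_t \end{bmatrix}$$

So an implementation can first concatenate $h_{t-1}$ and $x_t$ into a (hidden_size + input_size) × 1 matrix, then multiply it by a single weight of shape hidden_size × (hidden_size + input_size).

What looks like two linear layers can in fact be done with one linear layer.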
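The claim that the two linear layers collapse into one can be checked numerically. The following is a minimal sketch, assuming PyTorch is installed; the names `h_two`, `h_one`, `w_cat`, and `hx` are illustrative only and do not come from the notes. It reads the weights of a `torch.nn.RNNCell`, computes the update once as two linear maps and once as a single linear map over the concatenated vector, and compares both with the cell's own output.

```python
import torch

torch.manual_seed(0)
batch_size, input_size, hidden_size = 1, 4, 2

cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)
x = torch.randn(batch_size, input_size)
h_prev = torch.randn(batch_size, hidden_size)

with torch.no_grad():
    # Two separate linear maps, summed, then tanh.
    h_two = torch.tanh(x @ cell.weight_ih.T + cell.bias_ih
                       + h_prev @ cell.weight_hh.T + cell.bias_hh)

    # One linear map over the concatenated vector [h_prev, x].
    w_cat = torch.cat([cell.weight_hh, cell.weight_ih], dim=1)  # (hidden, hidden + input)
    hx = torch.cat([h_prev, x], dim=1)                          # (batch, hidden + input)
    h_one = torch.tanh(hx @ w_cat.T + cell.bias_ih + cell.bias_hh)

    # Reference result from the library cell.
    h_ref = cell(x, h_prev)

print(torch.allclose(h_two, h_ref, atol=1e-5))
print(torch.allclose(h_one, h_ref, atol=1e-5))
```

Note that this only demonstrates the algebraic equivalence; `RNNCell` itself exposes the two weight matrices separately as `weight_ih` and `weight_hh`.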

RNN Cell in PyTorch

How to Use RNNCell

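cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)
hidden = cell(input, hidden)

When constructing the data, the following must hold:

- input has shape (batch_size, input_size)

- hidden has shape (batch_size, hidden_size)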

Suppose we have a sequence with the following properties:

- batchSize=1 (batch size)

- seqLen=3 (sequence length)

- inputSize=4

- hiddenSize=2

So the shapes of the input and output of RNNCell are:

- input.shape=(batchSize,inputSize)

- output.shape=(batchSize,hiddenSize)

The sequence can be wrapped in one Tensor with shape:

- dataset.shape=(seqLen,batchSize,inputSize)

import torch

batch_size = 1
seq_len = 3
input_size = 4
hidden_size = 2

cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

# (seq, batch, features)
dataset = torch.randn(seq_len, batch_size, input_size)
# Initial hidden state h_0: all zeros
hidden = torch.zeros(batch_size, hidden_size)

# Iterating over the first dimension yields one (batch, features) slice per step
for idx, input in enumerate(dataset):
    print('=' * 20, idx, '=' * 20)
    print('Input size: ', input.shape)

    hidden = cell(input, hidden)

    print('outputs size: ', hidden.shape)
    print(hidden)

How to Use RNN

cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers)
out, hidden = cell(inputs, hidden)

Tips

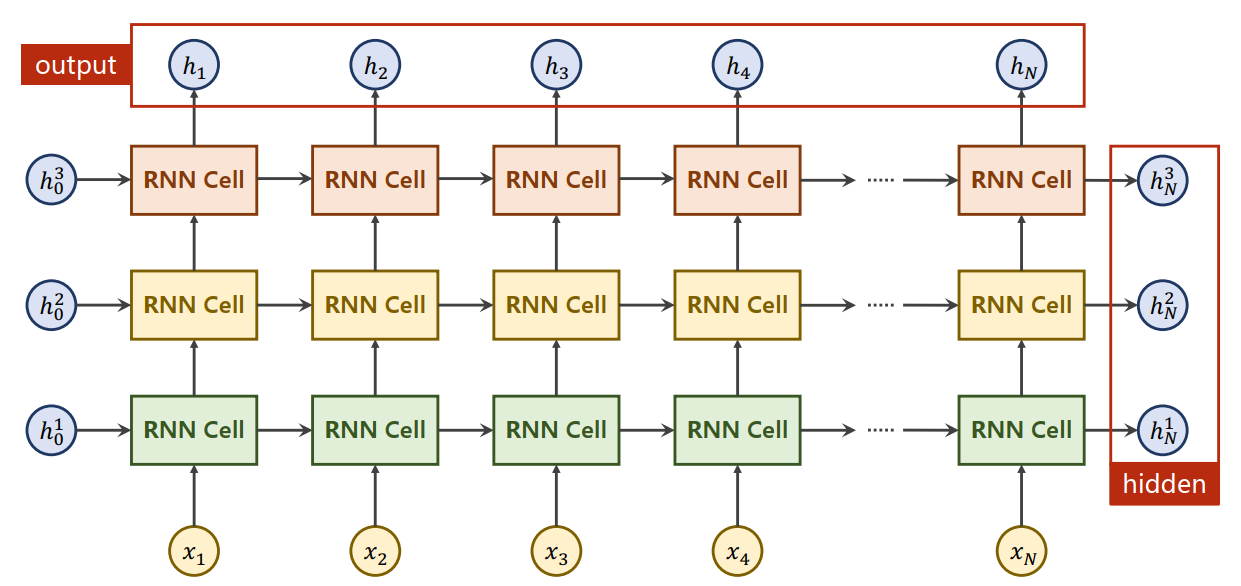

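Compared with RNNCell, RNN takes an additional parameter num_layers (RNN Cells can be stacked in multiple layers).

With RNN you do not need to write the loop yourself. Pass in the whole input sequence at once; it returns out, the hidden states of all steps, together with the final hidden state $h_N$.

When constructing the data, the following must hold:

- inputs has shape (seq_len, batch_size, input_size)

- hidden has shape (num_layers, batch_size, hidden_size)

Outputs:

- out has shape (seq_len, batch_size, hidden_size)

- hidden has shape (num_layers, batch_size, hidden_size)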
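To illustrate that RNN runs the loop for you, here is a minimal sketch, assuming PyTorch is installed and a single layer. It copies the layer-0 parameters of a `torch.nn.RNN` into a `torch.nn.RNNCell`, runs the cell in a hand-written loop, and compares the stacked result with the `out` returned by RNN. The names `steps` and `manual_out` are illustrative only.

```python
import torch

torch.manual_seed(0)
batch_size, seq_len, input_size, hidden_size = 1, 3, 4, 2

rnn = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=1)
cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

# Give the cell the same parameters as layer 0 of the RNN.
with torch.no_grad():
    cell.weight_ih.copy_(rnn.weight_ih_l0)
    cell.weight_hh.copy_(rnn.weight_hh_l0)
    cell.bias_ih.copy_(rnn.bias_ih_l0)
    cell.bias_hh.copy_(rnn.bias_hh_l0)

inputs = torch.randn(seq_len, batch_size, input_size)
h_0 = torch.zeros(1, batch_size, hidden_size)

# RNN: one call processes the whole sequence.
out, h_n = rnn(inputs, h_0)

# RNNCell: the loop is written by hand.
hidden = h_0[0]
steps = []
for x in inputs:
    hidden = cell(x, hidden)
    steps.append(hidden)
manual_out = torch.stack(steps)  # (seq_len, batch_size, hidden_size)

print(torch.allclose(out, manual_out, atol=1e-5))
print(torch.allclose(h_n[0], hidden, atol=1e-5))
```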

Suppose we have a sequence with the following properties:

- batchSize

- seqLen

- inputSize, hiddenSize

- numLayers

The shape of input and h_0 of RNN:

- input.shape=(seqLen,batchSize,inputSize)

- h_0.shape=(numLayers,batchSize,hiddenSize)

The shape of output and h_n of RNN:

- output.shape=(seqLen,batchSize,hiddenSize)

- h_n.shape=(numLayers,batchSize,hiddenSize)
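The shapes listed above can be confirmed with a small sketch, assuming PyTorch is installed. The concrete sizes (batchSize=2, seqLen=3, inputSize=4, hiddenSize=5, numLayers=2) are arbitrary values chosen for illustration. The last line also shows how the two return values relate: the final step of `output` is the final hidden state of the top layer, so it equals `h_n[-1]`.

```python
import torch

torch.manual_seed(0)
batch_size, seq_len, input_size, hidden_size, num_layers = 2, 3, 4, 5, 2

rnn = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size,
                   num_layers=num_layers)

inputs = torch.randn(seq_len, batch_size, input_size)
h_0 = torch.zeros(num_layers, batch_size, hidden_size)

output, h_n = rnn(inputs, h_0)

print(output.shape)  # torch.Size([3, 2, 5]) = (seqLen, batchSize, hiddenSize)
print(h_n.shape)     # torch.Size([2, 2, 5]) = (numLayers, batchSize, hiddenSize)

# output holds the top layer's hidden state at every step;
# h_n holds every layer's hidden state at the last step.
same = torch.allclose(output[-1], h_n[-1])
print(same)
```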

import torch

batch_size = 1
seq_len = 3
input_size = 4
hidden_size = 2
num_layers = 1

cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers)

# (seqLen, batchSize, inputSize)
inputs = torch.randn(seq_len, batch_size, input_size)
# h_0: (numLayers, batchSize, hiddenSize)
hidden = torch.zeros(num_layers, batch_size, hidden_size)

# One call processes the whole sequence; no explicit loop is needed
out, hidden = cell(inputs, hidden)

print('Output size: ', out.shape)
print('Output: ', out)
print('Hidden size: ', hidden.shape)
print('Hidden: ', hidden)

Tips

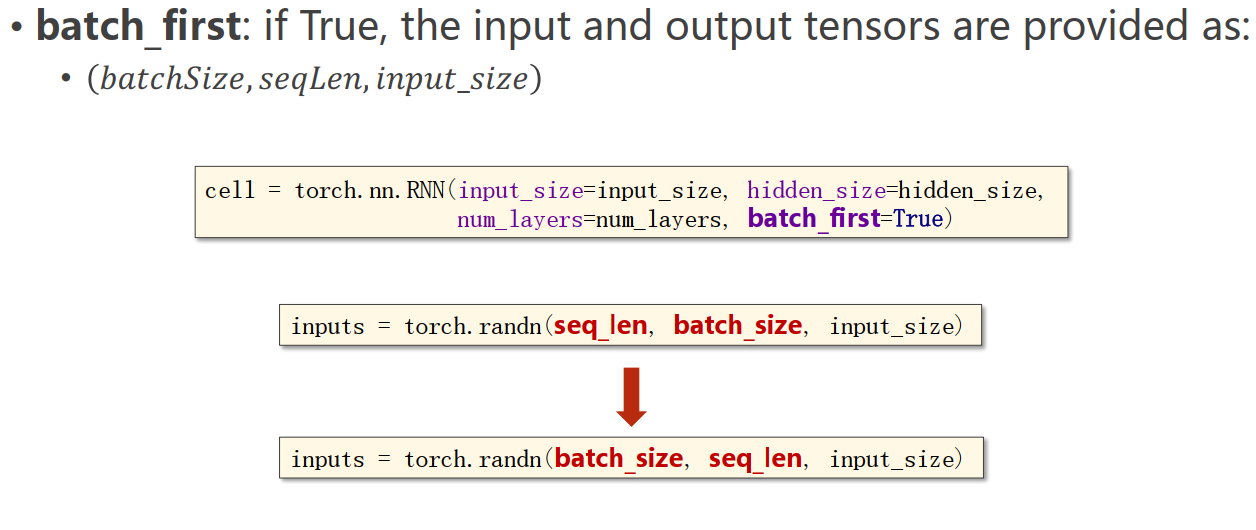

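Another available parameter of RNN is batch_first. With batch_first=True, the input and output tensors put the batch dimension first, i.e. (batchSize, seqLen, ...) instead of (seqLen, batchSize, ...).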
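A minimal sketch of `batch_first=True`, assuming PyTorch is installed; the sizes are arbitrary values chosen for illustration. Only the input and output tensors change layout. The hidden state keeps the shape (numLayers, batchSize, hiddenSize).

```python
import torch

batch_size, seq_len, input_size, hidden_size, num_layers = 2, 3, 4, 5, 1

rnn = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size,
                   num_layers=num_layers, batch_first=True)

# With batch_first=True the batch dimension comes first.
inputs = torch.randn(batch_size, seq_len, input_size)
# The hidden state layout is not affected by batch_first.
h_0 = torch.zeros(num_layers, batch_size, hidden_size)

output, h_n = rnn(inputs, h_0)

print(output.shape)  # torch.Size([2, 3, 5]) = (batchSize, seqLen, hiddenSize)
print(h_n.shape)     # torch.Size([1, 2, 5]) = (numLayers, batchSize, hiddenSize)
```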

Example: Using RNNCell

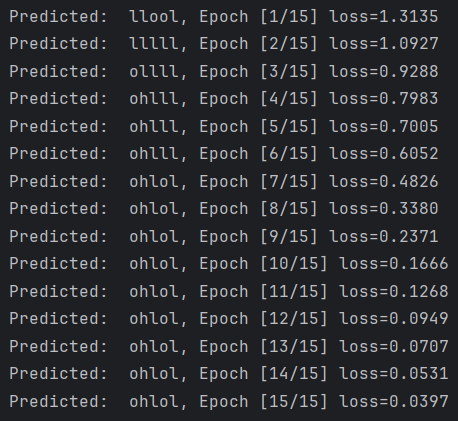

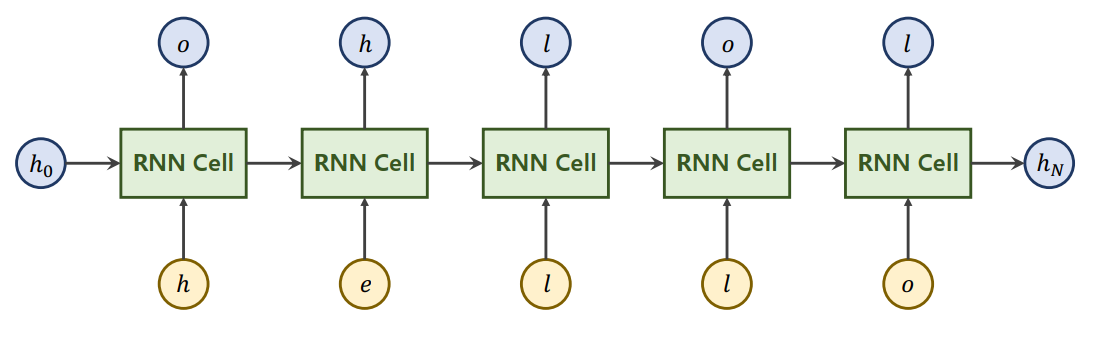

Train a model to learn: "hello" -> "ohlol"

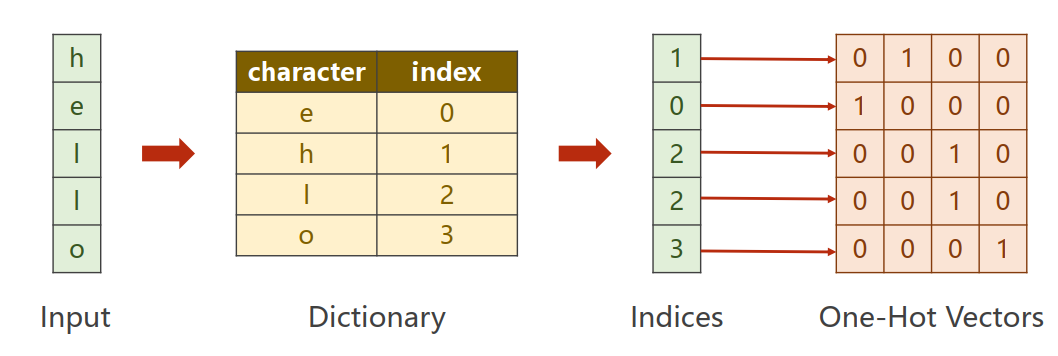

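First, the characters must be vectorized.

- Build a dictionary that assigns an index to each character

- Use the dictionary to convert each character into its index, then convert the index into a (one-hot) vector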

inputSize=4
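The vectorization steps can be sketched in plain Python. This is only an illustration: the helper `one_hot` and the mapping `char2idx` are hypothetical names, while the character order `['e', 'h', 'l', 'o']` matches the `idx2char` list used in the full examples of these notes.

```python
idx2char = ['e', 'h', 'l', 'o']
# Dictionary: character -> index
char2idx = {ch: i for i, ch in enumerate(idx2char)}


def one_hot(index, size):
    """Return a list of length `size` with a 1 at position `index`."""
    vec = [0] * size
    vec[index] = 1
    return vec


# Characters -> indices
x_data = [char2idx[ch] for ch in "hello"]
y_data = [char2idx[ch] for ch in "ohlol"]

# Indices -> one-hot vectors of length inputSize = 4
x_one_hot = [one_hot(i, len(idx2char)) for i in x_data]

print(x_data)        # [1, 0, 2, 2, 3]
print(y_data)        # [3, 1, 2, 3, 2]
print(x_one_hot[0])  # [0, 1, 0, 0]
```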

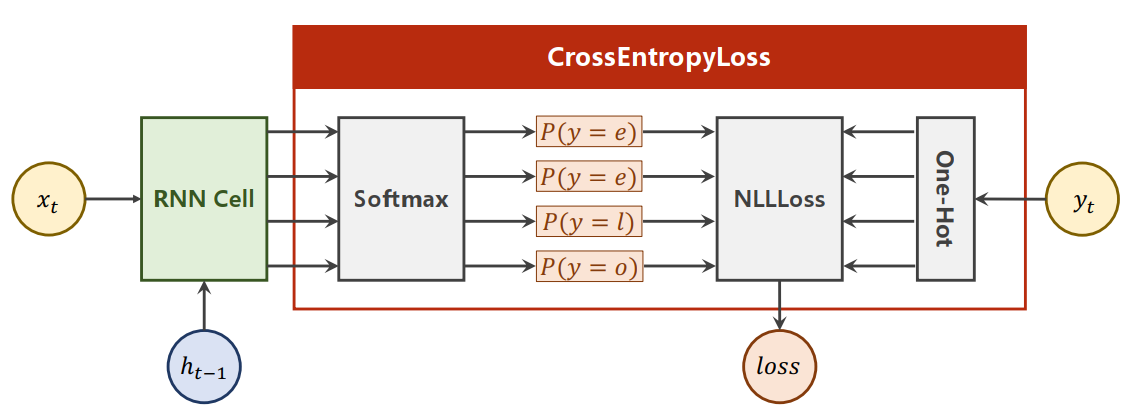

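This is in fact a classification problem: for each position, decide which letter the output should be. Each output is a vector of length 4, one score per character in the dictionary. Passing this length-4 vector through the softmax inside the cross-entropy loss turns it into a probability distribution.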

outputSize=4
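To make the link between the length-4 output and a distribution concrete, here is a minimal sketch, assuming PyTorch is installed. The values in `logits` are made up for illustration. It shows that softmax turns the scores into probabilities summing to 1, and that `torch.nn.CrossEntropyLoss` on the raw scores equals the negative log of the probability assigned to the target class.

```python
import torch

# Hypothetical raw scores for the 4 characters ['e', 'h', 'l', 'o'], batch of 1.
logits = torch.tensor([[0.2, -1.0, 0.5, 2.0]])
target = torch.tensor([3])  # the correct character is 'o'

# Softmax turns the scores into a probability distribution.
probs = torch.softmax(logits, dim=1)
print(probs.sum().item())

# CrossEntropyLoss takes raw scores and applies log-softmax internally.
loss = torch.nn.CrossEntropyLoss()(logits, target)
manual = -torch.log(probs[0, target[0]])
print(torch.allclose(loss, manual))
```

This is why the models in these notes return raw scores and do not apply softmax themselves.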

RNNCell

使用RNNCell完整代码如下:

import torch

input_size = 4

hidden_size = 4

batch_size = 1

# Prepare Data

idx2char = ['e', 'h', 'l', 'o']

x_data = [1, 0, 2, 2, 3]

y_data = [3, 1, 2, 3, 2]

one_hot_lookup = [[1, 0, 0, 0],

[0, 1, 0, 0],

[0, 0, 1, 0],

[0, 0, 0, 1]]

x_one_hot = [one_hot_lookup[x] for x in x_data]

inputs = torch.Tensor(x_one_hot).view(-1, batch_size, input_size)

labels = torch.LongTensor(y_data).view(-1, 1)

# Design Model

class Model(torch.nn.Module):

def __init__(self, input_size, hidden_size, batch_size):

super().__init__()

self.batch_size = batch_size

self.input_size = input_size

self.hidden_size = hidden_size

self.rnncell = torch.nn.RNNCell(input_size=self.input_size, hidden_size=self.hidden_size)

def forward(self, input, hidden):

hidden = self.rnncell(input, hidden)

return hidden

# 生成默认的h0

def init_hidden(self):

return torch.zeros(self.batch_size, self.hidden_size)

net = Model(input_size, hidden_size, batch_size)

# Loss and Optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(net.parameters(), lr=0.1)

# Training Cycle

for epoch in range(15):

loss = 0

optimizer.zero_grad()

hidden = net.init_hidden()

print('Predicted string: ', end='')

for input,label in zip(inputs, labels):

hidden = net(input, hidden)

loss += criterion(hidden, label)

_, idx = hidden.max(dim=1)

print(idx2char[idx.item()],end='')

loss.backward()

optimizer.step()

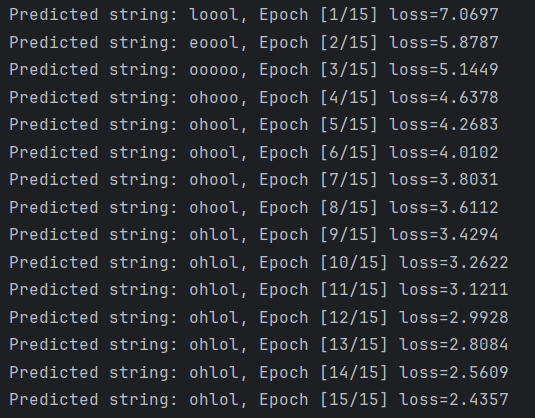

print(', Epoch [%d/15] loss=%.4f' % (epoch+1, loss.item()))运行结果:

RNN

使用RNN,需要修改数据、模型、训练周期:

import torch

input_size = 4

hidden_size = 4

num_layers = 1

batch_size = 1

seq_len = 5

# Prepare Data

idx2char = ['e', 'h', 'l', 'o']

x_data = [1, 0, 2, 2, 3]

y_data = [3, 1, 2, 3, 2]

one_hot_lookup = [[1, 0, 0, 0],

[0, 1, 0, 0],

[0, 0, 1, 0],

[0, 0, 0, 1]]

x_one_hot = [one_hot_lookup[x] for x in x_data]

inputs = torch.Tensor(x_one_hot).view(seq_len, batch_size, input_size)

labels = torch.LongTensor(y_data)

# Design Model

class Model(torch.nn.Module):

def __init__(self, input_size, hidden_size, batch_size, num_layers=1):

super().__init__()

self.num_layers = num_layers

self.batch_size = batch_size

self.input_size = input_size

self.hidden_size = hidden_size

self.rnn = torch.nn.RNN(input_size=self.input_size, hidden_size=self.hidden_size, num_layers=num_layers)

def forward(self, input):

hidden = torch.zeros(self.num_layers, self.batch_size, self.hidden_size)

out, _ = self.rnn(input, hidden)

return out.view(-1, self.hidden_size)

net = Model(input_size, hidden_size, batch_size, num_layers)

# Loss and Optimizer

criterion = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(net.parameters(), lr=0.1)

# Training Cycle

for epoch in range(15):

optimizer.zero_grad()

outputs = net(inputs)

loss = criterion(outputs, labels)

loss.backward()

optimizer.step()

_, idx = outputs.max(dim=1)

idx = idx.data.numpy()

print('Predicted: ', ''.join([idx2char[x] for x in idx]), end='')

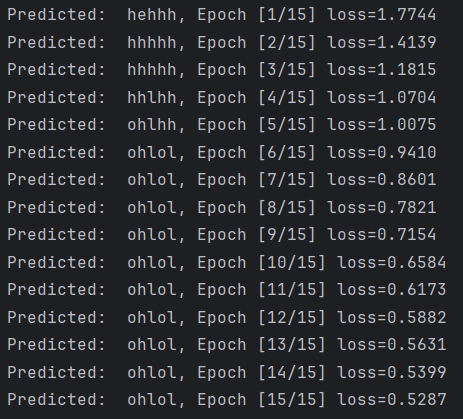

print(', Epoch [%d/15] loss=%.4f' % (epoch+1, loss.item()))运行结果:

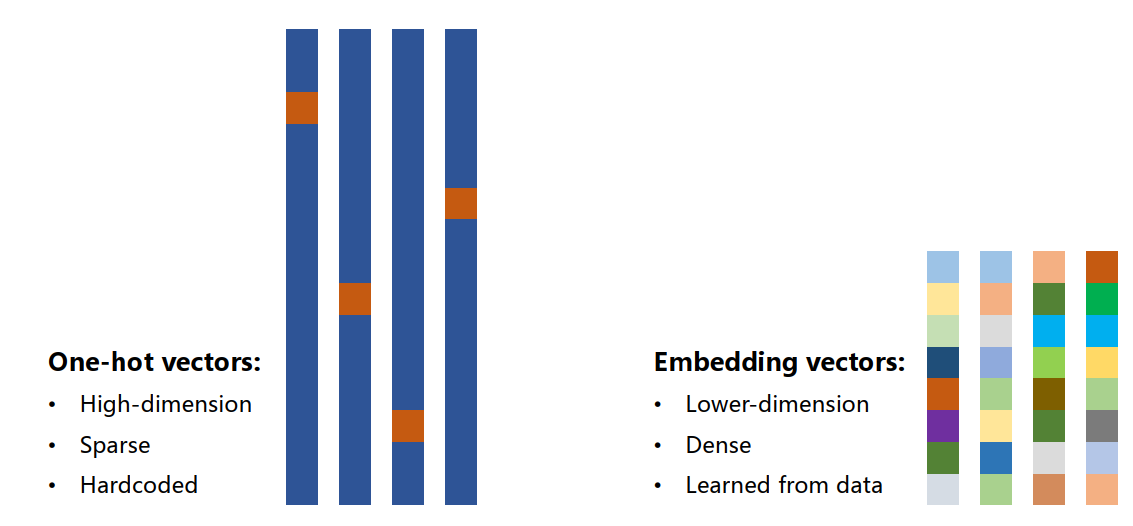

Associate a vector with a word/character

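Drawbacks of using one-hot vectors to represent characters:

- High dimensionality (the curse of dimensionality)

- Sparse

- Hard-coded (not learned from data)

A common and powerful alternative: EMBEDDING (an embedding layer)

EMBEDDING maps high-dimensional, sparse samples into a low-dimensional, dense space (dimensionality reduction):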

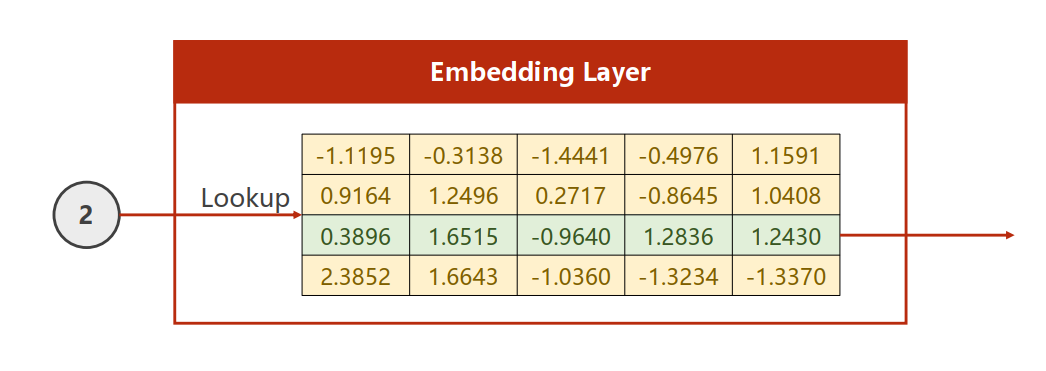

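How EMBEDDING works:

The EMBEDDING Layer converts a vector of size input_size into a vector of size embedding_size. This is equivalent to multiplying its weight matrix by the one-hot vector, which amounts to looking up the entry of the weight matrix for that index.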

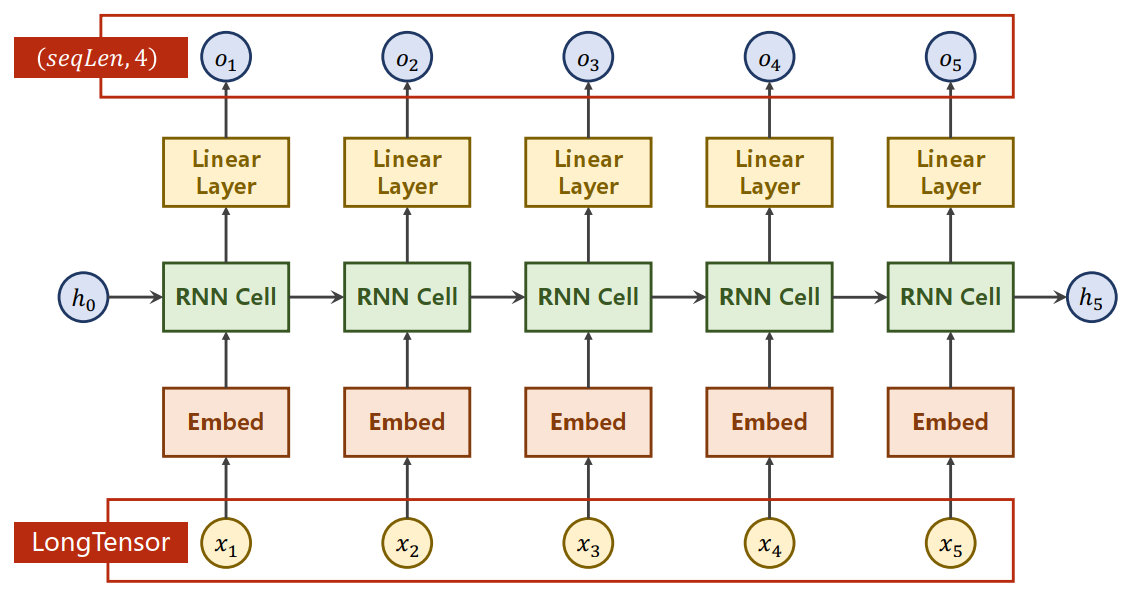
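The equivalence between an embedding lookup and a one-hot matrix product can be checked with a short sketch, assuming PyTorch is installed. The names `looked_up`, `one_hot`, and `multiplied` are illustrative only.

```python
import torch

torch.manual_seed(0)
input_size, embedding_size = 4, 10

emb = torch.nn.Embedding(input_size, embedding_size)
indices = torch.tensor([1, 0, 2, 2, 3])  # "hello" with idx2char = ['e', 'h', 'l', 'o']

# Direct lookup: each index selects one row of emb.weight.
looked_up = emb(indices)  # (5, embedding_size)

# Same result via one-hot vectors times the weight matrix.
one_hot = torch.nn.functional.one_hot(indices, num_classes=input_size).float()  # (5, input_size)
multiplied = one_hot @ emb.weight  # (5, embedding_size)

print(looked_up.shape)
print(torch.allclose(looked_up, multiplied))
```

The lookup avoids building the sparse one-hot vectors at all, which is why the embedding model takes integer indices (a `LongTensor`) as input.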

Using Embedding and Linear Layer

import torch

num_class = 4
input_size = 4
hidden_size = 8
embedding_size = 10
num_layers = 2
batch_size = 1
seq_len = 5

# Prepare Data
idx2char = ['e', 'h', 'l', 'o']
x_data = [[1, 0, 2, 2, 3]]  # "hello", shape (batch, seqLen)
y_data = [3, 1, 2, 3, 2]    # "ohlol", shape (batch * seqLen)

# Embedding expects integer indices, so inputs is a LongTensor
inputs = torch.LongTensor(x_data)
labels = torch.LongTensor(y_data)

# Design Model
class Model(torch.nn.Module):
    def __init__(self):
        super().__init__()
        self.emb = torch.nn.Embedding(input_size, embedding_size)
        self.rnn = torch.nn.RNN(input_size=embedding_size,
                                hidden_size=hidden_size,
                                num_layers=num_layers,
                                batch_first=True)
        self.fc = torch.nn.Linear(hidden_size, num_class)

    def forward(self, x):
        hidden = torch.zeros(num_layers, x.size(0), hidden_size)
        x = self.emb(x)             # (batch, seqLen, embeddingSize)
        x, _ = self.rnn(x, hidden)  # (batch, seqLen, hiddenSize)
        x = self.fc(x)              # (batch, seqLen, numClass)
        return x.view(-1, num_class)

net = Model()

# Loss and Optimizer
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(net.parameters(), lr=0.05)

# Training Cycle
for epoch in range(15):
    optimizer.zero_grad()
    outputs = net(inputs)
    loss = criterion(outputs, labels)
    loss.backward()
    optimizer.step()

    _, idx = outputs.max(dim=1)
    idx = idx.data.numpy()
    print('Predicted: ', ''.join([idx2char[x] for x in idx]), end='')
    print(', Epoch [%d/15] loss=%.4f' % (epoch + 1, loss.item()))

Output: